By John Romeo

When we look back at historical turning points — the Industrial Revolution, electrification, the birth of the internet — they appear clean, linear, and inevitable. Having changed the course of history, they now seem as though they always would.

That is not how it felt at the time. In the moment, there were multiple plausible futures, competing explanations, false starts, bubbles, and disappointments.

For leaders living through such periods, the hardest question is not “What’s happening?” but “Will it change the world?” They must decide amid uncertainty — and the cost of getting the timing wrong is asymmetric. Overreacting wastes capital and credibility. Underreacting risks irrelevance.

We see this play out in today’s debate about artificial intelligence. Many argue that we are on a path to artificial general intelligence, perhaps in just a few years. That view is amplified by media narratives that cast AI CEOs as singular visionaries presiding over a civilizational shift.

When I hear these voices, I’m reminded of the advice a mentor gave me early in my career: Listen carefully to what people say — then ask what would have to be true for them to say it. Look past the narrative. Follow the incentives. Understand who is talking their own book.

Today’s AI maximalists may be right, but it is impossible to ignore the incentive structure behind their claims. Leading AI companies are spending hundreds of billions of dollars to build data infrastructure and train models. To deliver a risk-adjusted return to investors on such vast sums, the technology can’t be merely useful — it needs to be transformational.

That is what makes the current moment so hard to read. The same incentives that could exaggerate AI’s impact to raise money also exist in a world where AI genuinely is the most important technology humanity has ever built. Noise and signal coexist — and they look remarkably similar.

So how might we tell whether this really is one of those moments? History suggests that transformative technologies reveal themselves not through bold claims, but by crossing a small number of hard-to-fake thresholds:

- Sustained capital intensity. When progress depends on continuous, massive investment — and involves governments, not just firms — a technology begins to resemble infrastructure rather than a product.

- Organizational strain. When productivity rises faster than organizations can manage it, decision-making becomes the bottleneck, and leaders grapple less with adoption and more with control, structure, and governance.

- Labor repricing. The signal is not job losses but faster erosion of the link between skills, credentials, and reward — including high-status, white-collar roles. AI does not create this dynamic; it accelerates one already underway.

- Governance gaps. These arise when technology progresses to a point where existing institutions are inadequate, and firms find themselves making decisions with societal consequences by default.

If these signals accumulate, the question of whether AI delivers utopia or disappointment becomes almost secondary. The inflection has already started.

Which brings us to today’s real leadership challenge. If AI turns out not to be the civilizational rupture some predict, the cost of thoughtful engagement will be limited. But if we are on the cusp of a profound transformation, the cost of disengagement will be measured not just in competitiveness but in trust, legitimacy, and social cohesion.

Leaders in this moment need to exercise judgment under ambiguity — resisting both hype and complacency, and acting with proportion rather than paralysis.

The responsibility now is not to predict the future, or fear it. It is to engage early, govern deliberately, and remember that technological power does not remove human choice — it intensifies it.

John Romeo is CEO of the Oliver Wyman Forum.

Few things stir as much hope and fear in corporate C-suites as artificial intelligence. Hopes of AI-led transformation drove gains in tech stocks for much of the past year, but concerns about massive investment in AI data centers helped knock $1 trillion off the value of six leading US AI plays in one week earlier this month.

Technological shifts inevitably generate uncertainty about how they will pan out. Yet AI is a unique technology that has been field-tested by hundreds of millions of people around the world. Our 300,000 Voices project, a five-year study of how people are changing the way they shop, invest, work, and live, shows why people are embracing AI and how companies can use it to deepen customer relationships.

Nearly half of retail investors are using AI for financial planning and advice, with usage growing by 33% between 2023 and 2025. And close to two-thirds of consumers use it to find and purchase new products, making shopping the most popular use case outside of work. That creates an opportunity for retailers and financial institutions to embed AI into the product experience to get to know customers better and provide customized goods and services.

Make AI part of the investing team

Since 2022, financial independence has jumped to the top of the list of skills that people wish they had learned more about growing up. Consumers today are worried about their finances, and the proportion of people who feel pressure to make money to be successful jumped by 80% between 2022 and 2025.

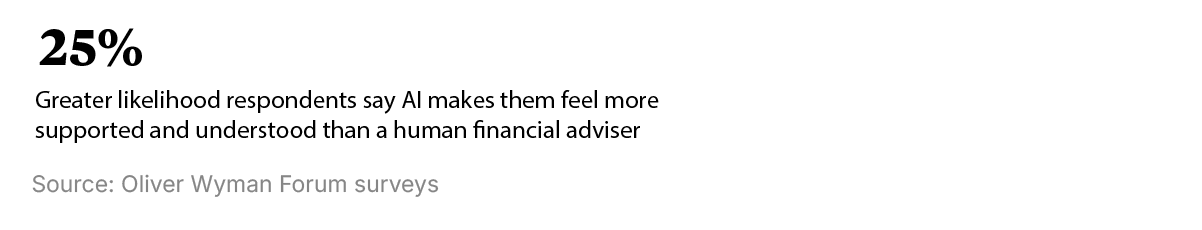

These are classic signs of unmet needs, and the early evidence is that people are eager to employ AI to fulfill them. Forty-four percent of people reported using AI for help with financial advice and planning in 2025, up from 34% two years earlier. Many investors feel AI understands their situation better and is less judgmental than human advisers. Yet it’s notable that the use of financial advisers by retail investors also has risen from 36% in 2021 to 43% in 2025.

Financial institutions should take these findings as an opportunity, not a threat. They can combine human insight and emotional support with powerful digital tools, so customers feel they’re learning to navigate markets, not being sold to. AI also can expand the potential market by making it cost-effective to serve more consumers with personalized advice.

Use AI to empower, not target, shoppers

It’s no surprise that so many consumers (62%) are using AI for shopping. People have well-developed digital habits, having embraced everything from online commerce to social media influencers. AI takes the convenience and range of e-shopping to the next level, matching a consumer’s personal needs and desires with the specific products or services that fulfill them. That may explain why the percentage of consumers who say brands create products and services that meet their needs rose by a third between 2022 and 2025.

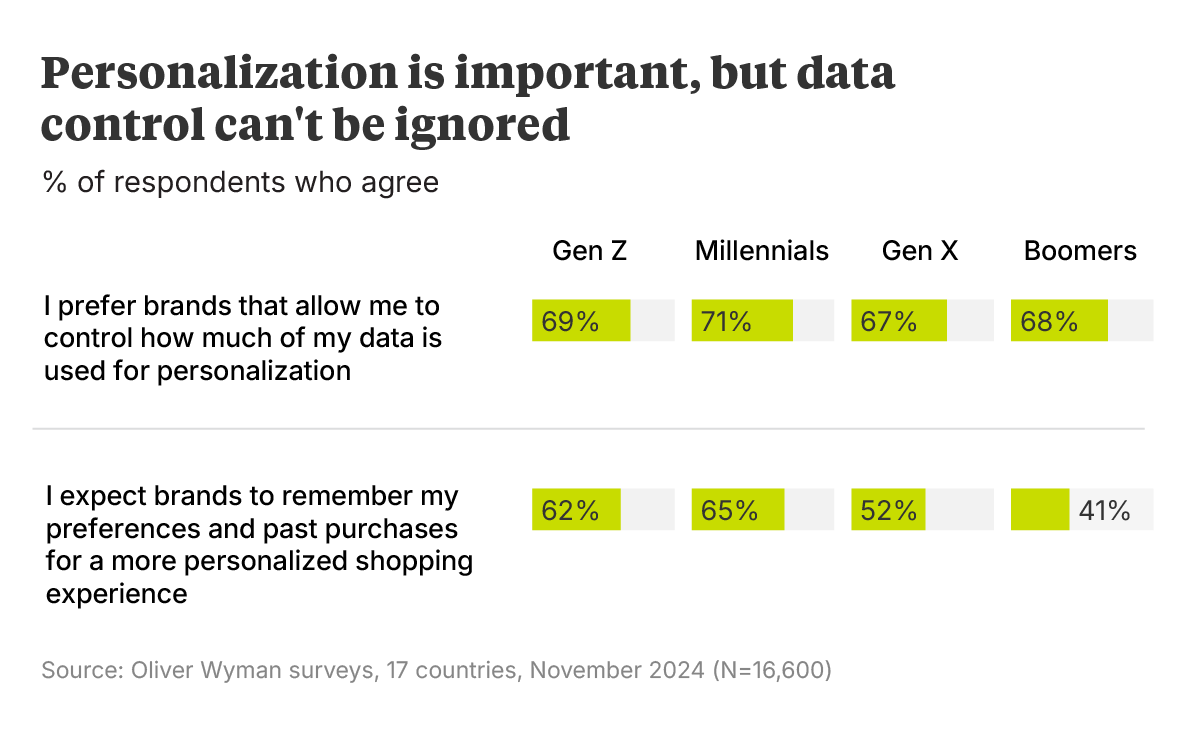

The problem for vendors is that rising satisfaction increases consumer expectations and weakens loyalty. Three in five consumers will ditch a brand after a single poor experience, with high-income shoppers the most likely to shift allegiance. People also want a say in how companies make their recommendations, with 69% demanding greater control over how much of their data is used for personalization. And they’re tired of digital targeting: “Too many ads” is the top complaint people have about social media, cited by 45% of respondents.

The takeaway for brands? Instead of targeting consumers, build personalization that customers control so it feels like a tool they wield instead of a system that wields them. Just over half of respondents say they would use AI to curate personalized lists of products based on preferences they provide. Brands also can use AI tools to improve customer retention by looking at repeat purchase patterns, complaints, and signals that buyers might be drifting away.

Markets may swing between hype and doubt, but customer behavior is already clear: People want AI to help them decide. Build for that reality and you’ll earn attention, trust, and loyalty.

Facing a more aggressive Russia and a less supportive United States, European nations have pledged to more than double defense spending by 2035. But countries need to align their priorities and collaborate more closely for that money to produce a real step change in defense capabilities, according to a new report from the Milken Institute and Oliver Wyman.

Defense spending in Europe traditionally has been driven by national priorities and industrial capabilities, resulting in a fragmentation of efforts. Europe has two competing multinational partnerships working to develop a next-generation fighter jet, for instance, while EU countries field 17 different battle tanks compared with one for the United States.

What’s needed is a European vision that coordinates readiness at a continental level and involves non-EU allies such as the United Kingdom and Norway, the report says. This approach should align national efforts around common standards and concentrate investment in a smaller number of weapons platforms.

The European Union last year laid out a defense readiness agenda, ReArm Europe 2030, that set targets for a greater European share of defense procurement and more joint ventures. That collaboration needs to accelerate, and governments need to work more closely with industry and investors to achieve those targets, the report says.

The report proposes the creation of a government-sponsored fund of funds to finance European defense start-ups and a “defense accelerator” to help scale up small and medium-sized firms. It also calls for public-private collaboration to finance contingent capacity that can ramp up defense production in a crisis. Greater financing is critical because Europe must grow its defense industrial capacity by 70% to meet its needs.

A successful rearmament effort is important not only for European security but also for stimulating a sluggish economy. European rearmament efforts are projected to drive more than $110 billion in aircraft deliveries between 2025 and 2032, according to Oliver Wyman’s Global Military Aircraft Fleet And Sustainment Outlook.

A selection of smart reads on business, technology, geopolitics, culture, and beyond.

- India May Be About To Become One Of The World’s Most Open Economies. Recent trade deals with the European Union, United Kingdom, and United States could make India a manufacturing powerhouse, says economist Arvind Subramanian.

- An Agent Revolt: Moltbook Is Not A Good Idea. An open source AI agent takes the tech world by storm, and within days agents meeting on their own social network create a religion and debate how to hide their activity from humans. “From a capability perspective, OpenClaw is groundbreaking,” one tech firm’s security team said. “From a security perspective, it’s an absolute nightmare.”

- See also: Five Ways Of Thinking About Moltbook.

- AI Doesn’t Reduce Work — It Intensifies It. For many, the promise of AI is to relieve humans of repetitive work, enabling them to focus on high-value tasks. The reality at one US tech firm was increased workload and the risk of burnout.

- Geopolitics In The Age Of Artificial Intelligence. US officials should not frame policy around a single vision of AI’s future, according to an article co-authored by Jake Sullivan, former National Security Advisor to President Joe Biden. Instead, they should explore scenarios around three key questions: Will AI accelerate to superintelligence or plateau? Will breakthroughs be easy or hard to copy? And is China racing to win the frontier or positioning itself to fast follow and commoditize?

- How Private Equity’s Big Bet On Software Was Derailed By AI. Software companies accounted for some 40% of deal activity by private equity firms over the past decade. Now the PE industry could be facing a “Darwinian moment” because of AI’s coding abilities and shakeout in software valuations.

- The Singularity Is Always Near. There’s a popular notion that accelerating technological progress is hurtling humanity toward a singularity, akin to entering a black hole, where the future is unknowable. A broader perspective shows that the singularity has been near since the dawn of time, and always will be, argues writer and Wired co-founder Kevin Kelly.

Every month, we highlight key data points drawn from more than five years of consumer research.